Let’s get one thing straight: Facebook’s AI moderation is an absolute joke.

I run a professional SEO forum. Users of all kinds ask nuanced, technical questions about link-building, WordPress, website speed, web shops, content clustering, optimization of every sort, and tools like WebPageTest, Google Insights, and Screaming Frog. Pretty uncontroversial, right?

Apparently not—if you’re Facebook’s prehistoric moderation bot.

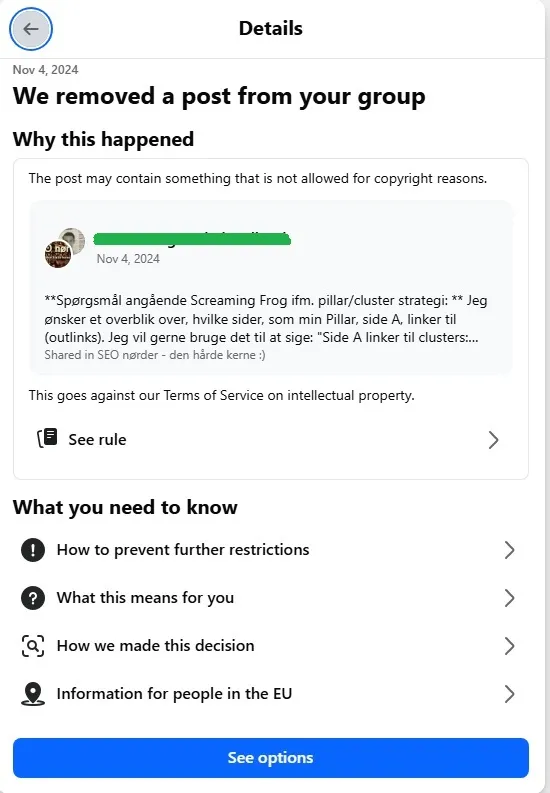

A user recently posted a question about using Screaming Frog in a pillar-cluster SEO strategy. A standard query. No links, no copyrighted content, no gray-hat tactics. And what did the all-knowing Facebook AI do?

It flagged the post as a violation of Intellectual Property policy.

Excuse me, Facebook—what the actual hell?

Where is the violation? Where is the infringement? What part of a link structure discussion screams “theft”? Are we living in a dystopian comedy where basic marketing questions are treated like international cybercrimes?

This is not an isolated event. This is the direct result of Facebook’s absurd delusion that an oversized machine learning algorithm can effectively moderate 3 billion users with context, nuance, and logic. News flash: It can’t. It never could. And every day it continues pretending otherwise is a new layer of digital farce.

AI Moderation: A Fantasy for Lazy Billionaires

Here’s the hard truth: LLMs (large language models) are not intelligent. They don’t understand your post. They don’t “read” your content. They generate output based on statistical patterns from training data. They’re autocomplete on steroids.

Context? Too hard.

Nuance? Good luck.

Subject-matter awareness? Only if your subject is “comparing apples to copyrighted lawsuits.”

Facebook wants us to believe its moderation system is “smart.” But when it nukes an innocent SEO discussion while letting disinformation, hate speech, and shady MLM schemes flourish, the facade crumbles.

Facebook’s AI Fails Because It Was Never Built to Understand — Just to Contain

Let’s be honest: Facebook isn’t using AI to protect anyone. It’s using AI to cut costs.

Human moderation is expensive. AI is cheap. So Meta built a labyrinth of poorly trained bots, slapped the word “intelligent” on them, and hoped we wouldn’t notice the mess. But we do. Constantly.

Every time a creator is banned for quoting their own article.

Every time a harmless meme gets shadowbanned.

Every time a community post is removed for reasons so vague they could double as AI-generated poetry.

Why It Will Keep Happening

LLMs and automated systems are inherently incapable of full contextual comprehension. Here’s why:

- They don’t know truth from fiction; they only recognize patterns.
- They don’t understand legality, only correlations between words and past flagged data.
- They lack domain knowledge: ask one about SEO and you’ll get a content brief, not comprehension.

That’s why talking about link architecture can trigger an “intellectual property” violation. The AI sees the word “cluster,” the word “strategy,” and “Screaming Frog,” and cobbles together some Frankenstein conclusion that you’re stealing something.
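To make that failure mode concrete, here is a deliberately crude sketch of a flagger that only knows word correlations. To be clear, this is not Facebook’s actual system, which nobody outside Meta can inspect. The training posts, the function names (`train`, `score`, `is_violation`), and the 0.5 threshold are all made up for illustration. The point is only that a system which learns "these words appeared in flagged posts" will happily flag an innocent question that shares those words.

```python
from collections import Counter

# Hypothetical moderation history: (post text, was it flagged as an IP violation?)
# Every entry here is invented for this illustration.
FLAGGED_HISTORY = [
    ("download cracked screaming frog license key free", True),
    ("copy this content strategy from competitor site", True),
    ("leaked premium plugin cluster download", True),
    ("what is your favourite pizza topping", False),
    ("happy birthday to my dog", False),
    ("how is the weather this weekend", False),
]


def train(history):
    """Count how many flagged vs. unflagged posts each word appears in."""
    flagged, clean = Counter(), Counter()
    for text, is_flagged in history:
        target = flagged if is_flagged else clean
        target.update(set(text.lower().split()))
    return flagged, clean


def score(text, flagged, clean):
    """Share of the post's known words that lean toward 'flagged'."""
    words = [w for w in set(text.lower().split()) if w in flagged or w in clean]
    if not words:
        return 0.0
    suspicious = [w for w in words if flagged[w] > clean[w]]
    return len(suspicious) / len(words)


def is_violation(text, flagged, clean, threshold=0.5):
    """Flag the post when enough of its words correlate with past flags."""
    return score(text, flagged, clean) >= threshold


flagged_counts, clean_counts = train(FLAGGED_HISTORY)

# A perfectly legitimate SEO question, no piracy anywhere in sight.
post = "How do I use Screaming Frog to audit a pillar cluster strategy"
print(is_violation(post, flagged_counts, clean_counts))
```

The question gets flagged because "screaming," "frog," "cluster," and "strategy" only ever showed up in the flagged examples. The code has no idea what the post is asking, and no amount of threshold tuning gives it one.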

The Real Risk: Stifling Useful, Legitimate Knowledge

When platforms over-police with blunt-force AI, the result isn’t safety—it’s sterility. Communities dry up. Experts leave. Real value disappears. And all that’s left are spam pages, meme farms, and engagement-chasing outrage posts that the AI somehow always misses.

It’s clear that Facebook doesn’t care about fostering informed communities. It cares about metrics, compliance theater, and automated enforcement that costs them nothing but destroys real conversation.

Dear Meta: Either Hire Real Moderators or Get Out of the Way

Until Facebook acknowledges the catastrophic failure of its moderation model, expect more idiotic removals like this one. Expect legitimate communities to die. Expect your own posts to be flagged for simply using industry terms that an AI can’t comprehend.

And when that happens, don’t be surprised—because you’re not being moderated by intelligence. You’re being moderated by a glorified spellchecker with a badge.

In conclusion:

Facebook, your AI moderation system is not just bad—it’s a public embarrassment.

You’re not protecting users.

You’re not enforcing meaningful policy.

You’re just outsourcing incompetence to a machine and pretending it’s progress.

Wake up. Or get out of the way before your platform becomes a graveyard of silenced experts and AI-induced nonsense.

#StopTheBot #AIIsNotModeration #FacebookFail