Pinterest’s Prehistoric AI Moderation: A Case of One Image, Two Verdicts, and Zero Logic

In an age where artificial intelligence is shaping the digital experience, Pinterest's moderation system appears to be stuck somewhere between the Stone Age and a bad game of "spot the difference." A recent case highlights just how flawed — and frankly absurd — this system can be.

Imagine this: you pin a tasteful, artistic image to one of your boards. Later, you decide it also fits well in another collection, so you save (repin) it to a second board. It’s the exact same image, same source, same context, same everything.

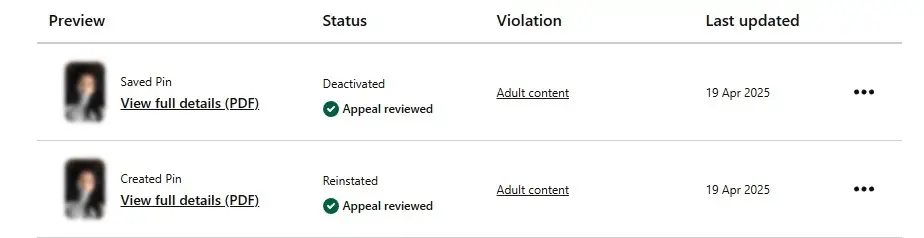

Then one day, Pinterest's AI hammer drops. Both pins are flagged and removed for “Adult Content” — a label as bewildering as it is baseless for an innocent artistic photo. You appeal. Their team — or rather, their automated appeal bot in a human costume — reviews the case. The result?

One pin is reinstated. The other remains deactivated.

Yes, Pinterest’s AI moderation system somehow managed to reach two different conclusions about the exact same piece of content, reviewed at the same time, under the same appeal process. If that sounds broken, it’s because it is.

This is not an isolated case. Pinterest’s AI moderation has a long history of misclassifying art, photography, anatomy studies, and vintage imagery as “Adult Content.” Meanwhile, actual spam accounts promoting explicit material, scams, and clickbait often slip through untouched — thriving in the algorithmic blind spots.

The irony is glaring: artists, educators, and creators are being penalized for sharing legitimate, tasteful content, while the platform claims to be a haven for creativity and inspiration. What Pinterest fails to grasp — or refuses to admit — is that context matters, and AI isn't nearly smart enough to understand it.

AI Without Accountability

Pinterest often boasts about using advanced machine learning to maintain a “safe and positive space.” But in practice, it feels more like being trapped in a Kafkaesque loop of AI errors and rubber-stamped decisions. The system is incapable of understanding nuance, and apparently, even incapable of recognizing that two instances of the same image are, well… the same.

This kind of inconsistency not only frustrates users but also undermines trust. When moderation becomes arbitrary and appeals yield nonsensical outcomes, the message is clear: Pinterest prioritizes automation and liability shielding over fairness and accuracy.

A Call for Transparency — and a Human Touch

If Pinterest wants to maintain its reputation as a visual discovery engine and creative space, it needs to overhaul its moderation approach. That starts with:

- Better AI training: recognizing the difference between art and explicit content
- Consistency in enforcement: the same image should not be treated differently across boards
- Human oversight in appeals: actual humans, not scripts, should evaluate context
- Transparency: users deserve a clear explanation when content is removed or reinstated

Until then, Pinterest’s AI will remain a clumsy gatekeeper rather than a tool for community safety: one that bans with one hand and reinstates with the other, without ever explaining why.

Repeated attempts to get Pinterest to clarify the reasoning behind the decision led nowhere. No explanation, no accountability, only the usual loop of canned replies, circular links, and silence. What users experience is not support but digital stonewalling: a wall built from auto-replies, scripted deflection, and dead ends.

And users? We’ll just keep scratching our heads… or taking our creativity elsewhere.