Instagram’s Flawed AI Strikes Again: Another Grotesque and Insane Verdict

Artificial Intelligence (AI) has been heralded as the future of digital moderation, promising efficiency, fairness, and consistency. However, reality paints a far darker picture. Instagram, one of the world's most influential social media platforms, recently provided yet another baffling example of AI gone wrong—an error so grotesque and irrational that it borders on the absurd. The case in question? A simple, artistic image of a girl lying on the forest floor, accompanied by poetic text, was flagged and removed under the accusation of spam. This incident, like many before it, exposes the deep flaws in Instagram’s AI moderation system and raises serious questions about whether AI is even capable of handling the immense complexity of content moderation at scale.

AI’s Fundamental Shortcoming: Task Overload and the Breakdown of Logic

AI thrives in controlled environments where it is assigned specific, repetitive tasks. For example, facial recognition algorithms can quickly and effectively match known patterns, while translation engines can convert text between languages with growing accuracy. However, when AI is tasked with moderating an entire platform like Instagram—a platform hosting an almost infinite variety of content—it faces an insurmountable challenge.

Moderation isn’t a simple, uniform task. It involves analyzing everything from violent content, nudity, and hate speech to misinformation, spam, and copyright infringement. Each of these categories requires nuanced understanding and contextual decision-making—something AI fundamentally struggles with. The sheer volume of posts means Instagram's AI is under immense pressure, processing millions of posts every hour. Like an overloaded human brain under extreme stress, it starts to break down, producing unpredictable, irrational, and often faulty verdicts.

It is not the sheer number of tasks that makes the AI break down; that could be solved simply by adding more servers in the data center. It is the great variety of tasks that overloads the AI and causes it to fail.

A Harmless Image, A Baseless Verdict

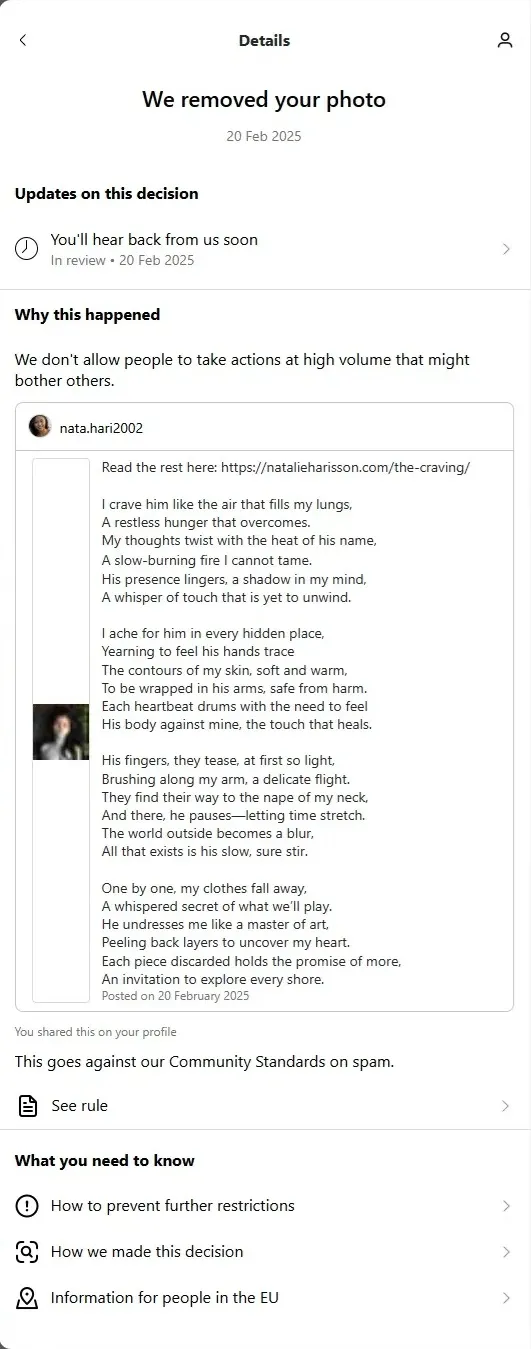

The image in question, a serene, artistic depiction of a girl lying in the forest, was not remotely offensive. It complied with Instagram’s community guidelines, and the accompanying text was merely a poetic excerpt. Yet Instagram’s AI deemed this completely innocuous content “spam.” How does this happen? AI’s moderation decisions are based on patterns, keywords, and previous reports. When overwhelmed, it begins making sweeping generalizations and absurd misinterpretations.

This is not an isolated case. Artists, photographers, and even everyday users have long been victims of Instagram’s broken AI moderation. Innocuous art is flagged as pornography, benign discussions are removed for alleged hate speech, and genuine accounts are taken down for spam while actual spam bots run rampant. This raises the pressing question: how does Instagram expect an overworked AI to fairly judge an ever-evolving landscape of human creativity and communication?

Here is the poem attached to the image (read the rest here: https://natalieharisson.com/the-craving):

I crave him like the air that fills my lungs,

A restless hunger that overcomes.

My thoughts twist with the heat of his name,

A slow-burning fire I cannot tame.

His presence lingers, a shadow in my mind,

A whisper of touch that is yet to unwind.

I ache for him in every hidden place,

Yearning to feel his hands trace

The contours of my skin, soft and warm,

To be wrapped in his arms, safe from harm.

Each heartbeat drums with the need to feel

His body against mine, the touch that heals.

His fingers, they tease, at first so light,

Brushing along my arm, a delicate flight.

They find their way to the nape of my neck,

And there, he pauses—letting time stretch.

The world outside becomes a blur,

All that exists is his slow, sure stir.

One by one, my clothes fall away,

A whispered secret of what we’ll play.

He undresses me like a master of art,

Peeling back layers to uncover my heart.

Each piece discarded holds the promise of more,

An invitation to explore every shore.

AI’s Pattern Recognition: A Double-Edged Sword

AI isn’t actually “intelligent” in the way humans understand intelligence. It recognizes patterns based on previous data and statistical probabilities. While this works well in applications like fraud detection, it fails spectacularly when applied to the infinitely varied world of social media.

Consider the possible logic behind the removal of this forest-floor image:

- Keyword Association: If the accompanying poem contained words commonly used in spam messages (such as “free,” “win,” or “exclusive”), the AI may have falsely flagged it as spam, even if the words were used in a poetic, unrelated context.

- Similarity to Prior Removals: If Instagram has mistakenly removed similar images in the past (perhaps dark-toned images or reclining figures misidentified as something inappropriate), its AI may have learned to wrongly associate such visuals with a violation.

- Overreaction to User Reports: If even a small number of users mistakenly or maliciously reported the post, Instagram’s AI, rather than investigating properly, could have blindly executed an automatic takedown.

The problem? AI lacks the ability to critically analyze whether the patterns it detects are valid in the context of a specific case. Instead, it applies sweeping generalizations—leading to the absurd removal of entirely legitimate content.
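To make the first of these possibilities concrete, here is a minimal sketch of how a naive keyword-based spam filter could misfire. Everything in it is an assumption made for illustration: Instagram's real moderation logic is not public, and the keyword list, the `keyword_spam_score` and `is_flagged` functions, the threshold, and both sample captions are invented here (the poetic line is not taken from the poem above).

```python
import re

# Hypothetical spam vocabulary, taken from the examples in the list above.
# Instagram's real signals are not public.
SPAM_KEYWORDS = {"free", "win", "exclusive"}


def keyword_spam_score(text: str) -> float:
    """Return the fraction of words in `text` that are in SPAM_KEYWORDS."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in SPAM_KEYWORDS)
    return hits / len(words)


def is_flagged(text: str, threshold: float = 0.1) -> bool:
    """Flag text whose keyword density reaches an arbitrary threshold."""
    return keyword_spam_score(text) >= threshold


# Two invented captions: one poetic, one a genuine advertisement.
poetic = "Free as the wind, I win back my breath"
advert = "Win a free phone! Exclusive offer, free shipping!"

print(is_flagged(poetic))  # the poetic line is flagged
print(is_flagged(advert))  # and so is the advertisement
```

Both captions cross the threshold, because the filter counts words without any sense of how they are used. That is the kind of context-blind generalization described above.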

The Dangerous Consequences of AI Overreach

The consequences of AI’s flawed decision-making extend far beyond individual frustrations. For artists, creators, and businesses, these errors can be devastating. A wrongful takedown means lost engagement, damaged reputations, and, in extreme cases, the permanent deletion of an account. Instagram’s appeal process is notoriously opaque and ineffective, often leaving users with no recourse.

Moreover, AI’s flawed moderation can lead to the suppression of important social and political content. Activists and journalists have reported censorship of their posts, not because they violate guidelines, but because AI mistakenly flags sensitive topics as harmful or inappropriate. Meanwhile, actual harmful content, such as violent extremism and misinformation, often slips through the cracks, as AI lacks the nuanced understanding to differentiate between harmful intent and legitimate discussion.

The False Promise of AI Moderation

Tech companies like Instagram often defend their reliance on AI by arguing that human moderation is simply too slow and expensive at scale. However, this justification is deeply flawed. A system that enforces rules unfairly, unpredictably, and without accountability is worse than an imperfect human-led system.

Instead of relying solely on AI, Instagram should:

- Invest in more human oversight to review AI decisions and provide nuanced judgment where necessary.

- Improve transparency around how AI moderation decisions are made and offer clearer appeal processes for wrongful removals.

- Develop better hybrid models where AI serves as a first filter, but humans make the final judgment on more complex cases.
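The hybrid model in the last point can be sketched in a few lines. This is only an illustration under stated assumptions: the `Verdict` type, the `route` function, the label names, and the 0.95 threshold are all hypothetical and do not describe any system Instagram actually runs.

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    """Output of a hypothetical automated classifier."""
    label: str         # e.g. "spam" or "ok"
    confidence: float  # classifier's confidence in `label`, 0.0 to 1.0


def route(verdict: Verdict, auto_threshold: float = 0.95) -> str:
    """Decide what happens to a post after the automated first pass."""
    if verdict.label == "ok":
        return "publish"
    if verdict.confidence >= auto_threshold:
        # Only near-certain violations are removed automatically.
        return "remove"
    # Everything uncertain goes to a person for the final judgment.
    return "human_review"


print(route(Verdict("spam", 0.99)))  # remove
print(route(Verdict("spam", 0.60)))  # human_review
print(route(Verdict("ok", 0.50)))    # publish
```

The design choice is that the AI only acts alone when it is nearly certain. A borderline "spam" verdict on an artistic post would land in a human reviewer's queue instead of triggering an automatic takedown.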

Conclusion: A Broken System in Desperate Need of Fixing

Instagram’s latest AI blunder—wrongfully flagging a completely harmless image as spam—once again highlights the dangers of over-reliance on artificial intelligence for content moderation. AI is excellent at handling repetitive, uniform tasks, but when thrown into the chaos of social media, it functions like an overloaded brain under extreme stress, producing nonsensical results.

Until Instagram acknowledges these fundamental flaws and commits to meaningful reform, users will continue to suffer from arbitrary and grotesque AI verdicts. The time has come for tech giants to stop hiding behind AI as an excuse for poor moderation and start investing in systems that truly respect human creativity, expression, and fairness.